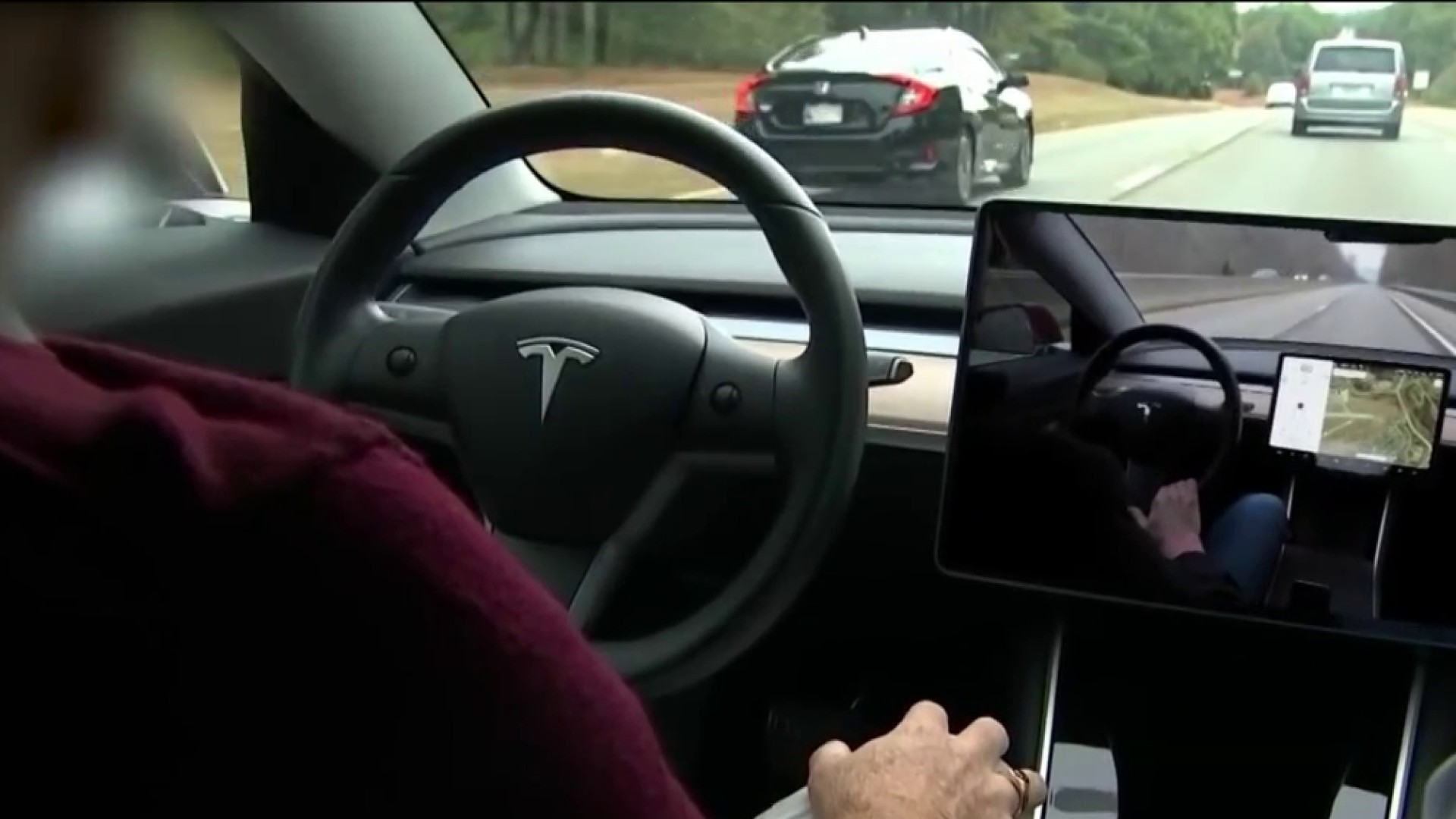

The U.S. government has opened a formal investigation into Tesla’s Autopilot partially automated driving system after a series of collisions with parked emergency vehicles, including a 2019 crash involving a Massachusetts state trooper while he was pulled over on the highway.

The investigation covers 765,000 vehicles, almost everything that Tesla has sold in the U.S. since the start of the 2014 model year. In the crashes identified by the National Highway Traffic Safety Administration as part of the investigation, 17 people were injured and one was killed.

NHTSA says it has identified 11 crashes since 2018 in which Teslas on Autopilot or Traffic Aware Cruise Control have hit vehicles at scenes where first responders have used flashing lights.

The investigation covers Tesla's entire current model lineup, the Models Y, X, S and 3 from the 2014 through 2021 model years.

In the Massachusetts incident, a state trooper had stopped 21-year-old college student Maria Smith along Route 24 in West Bridgewater. As the trooper approached the driver’s side window, a Tesla struck the back of the state police cruiser, whose lights were flashing.

"It just happened so quick," Smith told NBC10 last year. "Before I knew it, my car was flying forward. I looked behind me, and my whole back windshield was blown out. There was glass in my hair."

According to court documents, the driver of the Tesla told police his vehicle was in Autopilot mode prior to the collision and he “must not have been paying attention.”

The National Transportation Safety Board, which also has investigated some of the Tesla crashes, has recommended that NHTSA and Tesla limit Autopilot’s use to areas where it can safely operate. The NTSB also recommended that NHTSA require Tesla to have a better system to make sure drivers are paying attention. NHTSA has not taken action on any of the recommendations. The NTSB has no enforcement powers and can only make recommendations to other federal agencies such as NHTSA.

Autopilot has frequently been misused by Tesla drivers, who have been caught driving drunk or even riding in the back seat while a car rolled down a California highway.

In 2019, a Newburyport driver told NBC10 Boston he fell asleep behind the wheel while his Tesla traveled in Autopilot mode along Route 2. The driver shared his story to highlight some of the ways Tesla owners were taking detours around the safety warning system meant to keep their hands on the wheel.

The NTSB has sent investigative teams to 31 crashes involving partially automated driver assist systems since June 2016. Such systems can keep a vehicle centered in its lane and a safe distance from vehicles in front of it. Of those crashes, 25 involved Tesla Autopilot, and 10 deaths were reported in them, according to data released by the agency.

Tesla and other manufacturers warn that drivers using the systems must be ready to intervene at all times. Teslas using the system have crashed into semis crossing in front of them, stopped emergency vehicles and a roadway barrier.

A message was left early Monday seeking comment from Tesla, which has disbanded its media relations office.

The crashes into emergency vehicles cited by NHTSA began on Jan. 22, 2018, in Culver City, California, near Los Angeles, when a Tesla using Autopilot struck a firetruck that was parked partially in the travel lanes with its lights flashing. Crews were handling another crash at the time.

Since then, the agency said, there were crashes in Laguna Beach, California; Norwalk, Connecticut; Cloverdale, Indiana; West Bridgewater, Massachusetts; Cochise County, Arizona; Charlotte, North Carolina; Montgomery County, Texas; Lansing, Michigan; and Miami, Florida.

“The investigation will assess the technologies and methods used to monitor, assist and enforce the driver's engagement with the dynamic driving task during Autopilot operation,” NHTSA said in its investigation documents.

In addition, the probe will cover object and event detection by the system, as well as where it is allowed to operate. NHTSA says it will examine “contributing circumstances” to the crashes, as well as similar crashes.

An investigation could lead to a recall or other enforcement action by NHTSA.

“NHTSA reminds the public that no commercially available motor vehicles today are capable of driving themselves,” the agency said in a statement. “Every available vehicle requires a human driver to be in control at all times, and all state laws hold human drivers responsible for operation of their vehicles.”

The agency said it has “robust enforcement tools” to protect the public and investigate potential safety issues, and it will act when it finds evidence “of noncompliance or an unreasonable risk to safety.”

In June, NHTSA ordered all automakers to report any crashes involving fully autonomous vehicles or partially automated driver assist systems.

The measures show the agency has started to take a tougher stance on automated vehicle safety than in the past. It has been reluctant to issue any regulations of the new technology for fear of hampering adoption of the potentially life-saving systems.

Shares of Tesla Inc., based in Palo Alto, California, fell 3.5% at the opening bell Monday.

Auto safety watchdog Sean Kane called the NHTSA investigation a step in the right direction, but he told NBC10 Boston the technology should’ve been regulated prior to being rolled out on public roadways.

“You’ve got the tail wagging the dog,” Kane said. “You’re trying to chase down those problems and fix it after that fact. And after the fact means deaths and injuries are occurring on the roadways that could’ve been prevented.”

The Associated Press contributed to this report.