Instagram announced on Monday that it is releasing two new features to help reduce cyberbullying on the platform.

The company said it has been using artificial intelligence to detect bullying and other harmful content in posts, and has created new features designed to prevent bullying on Instagram and to empower targets to stand up for themselves.

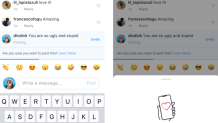

The first feature, powered by AI, notifies users if the comment they are about to post may be inappropriate. Before the comment is posted, a prompt asks, “Are you sure you want to post this?” Instagram said in a release that early tests have shown this intervention has encouraged people to undo their harmful comments.

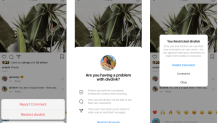

The second feature lets users protect their accounts from unwanted interactions with a “Restrict” button. According to Instagram, young people are often hesitant to block, unfollow, or report a bully because doing so could escalate the situation, particularly if they also interact with the bully in person. Unlike blocking or unfollowing, which the other person can notice, “Restrict” lets you limit your interactions with a bully without notifying them.

“Restrict” also ensures that the restricted user won’t be able to see when you’re active on Instagram, whether you’ve read their direct messages, or whether you’ve deleted their comments. Their comments on your posts will still be visible to both you and them, but not to other users unless you choose to make them public.

“It’s our responsibility to create a safe environment on Instagram,” Head of Instagram Adam Mosseri said in a release. “These tools are grounded in a deep understanding of how people bully each other and how they respond to bullying on Instagram, but they’re only two steps on a longer path.”